A photo is just one moment. But an AI kiss video is a full sequence of continuous moments.

An AI kissing video generator needs to reasonably predict and fill in the intermediate images that did not originally exist. It is not “editing the original photo,” but “generating a series of new frames.”

So the AI is not conjuring a video out of thin air. It follows a defined sequence of steps.

To keep the faces consistent from beginning to end, it first needs to recognize the faces and poses, then predict the motion, generate the frames one by one, and finally stitch them together into a complete kissing scene.

This guide explains the core principles and technical logic behind AI kissing videos in plain terms.

What AI Kissing Generators Actually Do

At the user level, an AI kissing generator feels simple. You upload one or two photos, wait a few seconds, and get back a short clip where two people lean in and kiss. But technically, this is much closer to video generation than to ordinary photo editing.

A traditional editor can only modify pixels that already exist. An AI kissing tool has to do much more than that. It has to understand who is in the image, imagine how they could move, and generate the missing visual information needed to turn one frozen frame into a sequence.

That is why AI kissing works as a form of generated motion rather than as a hidden “effect” already buried inside the photo. It is a mix of image understanding, motion generation, identity preservation, and video synthesis.

Core Technology Behind AI Kissing Video Tools

1. Video Diffusion Models

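This technology is responsible for “turning a still photo into a moving video.” It does not simply add a few frames to the original. Instead, it generates the whole motion sequence as new video, typically by starting from noise and refining all the frames together while staying anchored to the reference photo.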

2. Identity Preservation

This technology is responsible for “making sure the generated person still looks like the original person.” It captures facial and appearance features from the reference photo and tries to prevent the person from looking less and less like the original during video generation.

3. Motion and Expression Control

This technology is responsible for “deciding how the people move.” For example, how two people move closer, how they turn their heads, and when they close their eyes are usually guided by pose signals, keypoints, or motion sequences.

4. Temporal Consistency

This technology is responsible for “keeping the whole video consistent from beginning to end.” Without it, the video is more likely to flicker, shake, or show unstable facial features. With it, consecutive frames can stay more stable and look more like real footage.
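To make the idea of temporal consistency concrete, here is a minimal sketch of a crude flicker measure. It assumes each frame is a flat list of grayscale values, and the `flicker_score` helper is a hypothetical name used for illustration, not part of any real tool. Genuine motion also raises this number, so treat it as a rough proxy only.

```python
def flicker_score(frames):
    """Mean absolute pixel change between consecutive frames.

    Lower values mean steadier footage. Frames are assumed to be
    flat lists of grayscale values (0-255) of equal length.
    """
    if len(frames) < 2:
        return 0.0
    total = 0.0
    for prev, curr in zip(frames, frames[1:]):
        diffs = [abs(a - b) for a, b in zip(prev, curr)]
        total += sum(diffs) / len(diffs)
    return total / (len(frames) - 1)


# Illustrative data: a steady clip and a flickering one.
steady = [[100, 100, 100, 100], [101, 100, 99, 100], [102, 100, 98, 100]]
flickery = [[100, 100, 100, 100], [140, 60, 140, 60], [100, 100, 100, 100]]

print(flicker_score(steady))    # small change per frame
print(flicker_score(flickery))  # large change per frame
```

A real system would compare frames after compensating for motion, but the simple version already separates a stable clip from a flickering one.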

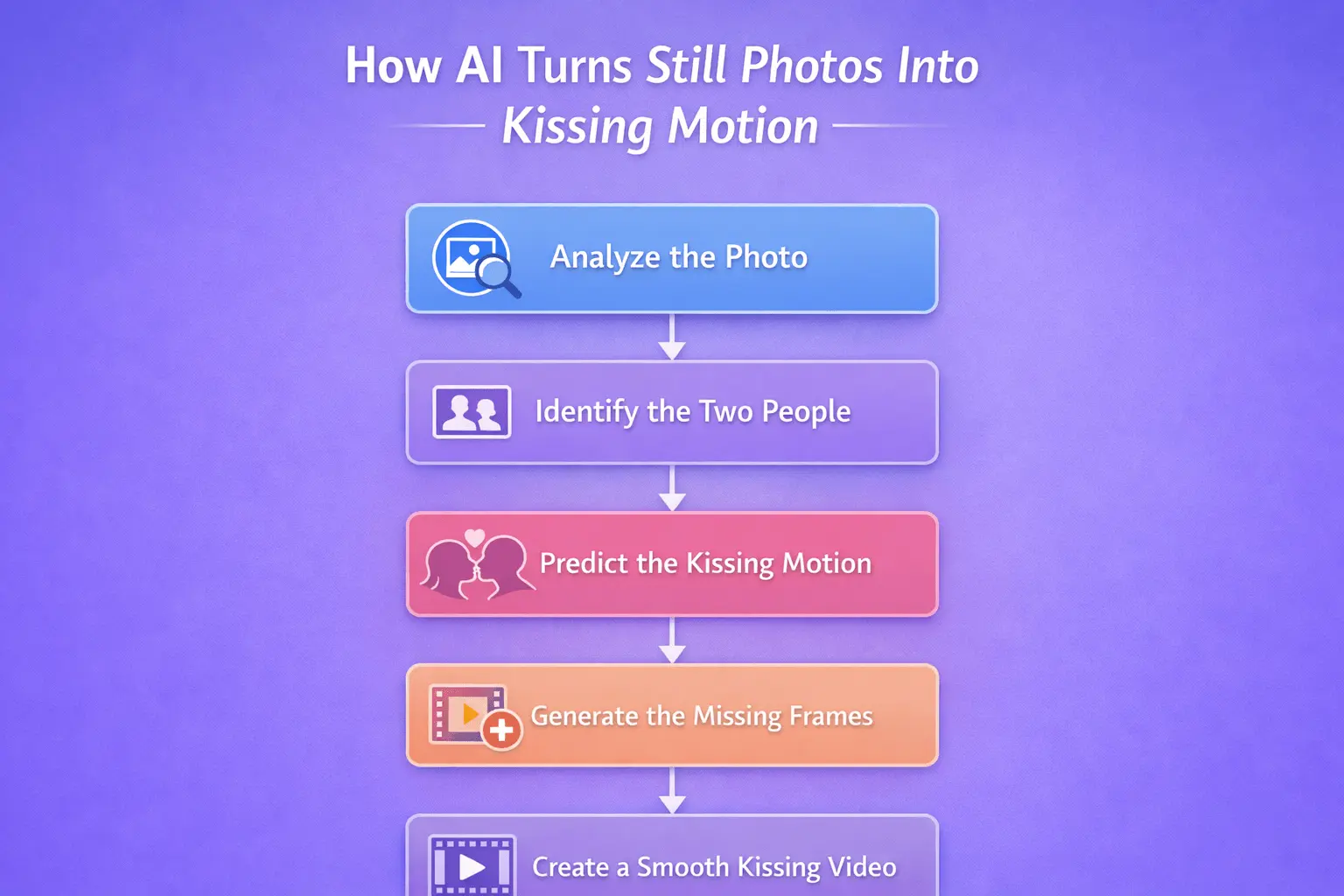

How AI Turns Still Photos Into Kissing Motion

Understand What’s in the Photo

The first job is understanding the photo itself. Before an AI model can animate anything, it has to work out who is in the image, where each face is, how the heads are angled, what the facial structure looks like, and how the two people are positioned relative to each other.

This is why a good AI kiss generator does not simply “look at the whole picture and guess.” It first builds an internal understanding of the people in the frame. If the faces are clear, the angles are readable, and the subjects are well separated from the background, the model has a much better starting point for animation.
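As a rough illustration of what "understanding the photo" could produce, the sketch below turns two face bounding boxes into a simple layout description. It assumes a face detector has already supplied the boxes. `FaceBox` and `describe_layout` are hypothetical names, and real tools likely use much richer signals such as landmarks and head pose.

```python
from dataclasses import dataclass


@dataclass
class FaceBox:
    """Hypothetical face bounding box in pixels (top-left origin)."""
    x: float
    y: float
    w: float
    h: float

    @property
    def center(self):
        return (self.x + self.w / 2, self.y + self.h / 2)


def describe_layout(a, b):
    """Summarize how two faces sit relative to each other."""
    # Order the faces left to right by their horizontal centers.
    left, right = sorted((a, b), key=lambda f: f.center[0])
    gap = right.x - (left.x + left.w)  # horizontal space between the boxes
    avg_w = (left.w + right.w) / 2
    return {
        "left_center": left.center,
        "right_center": right.center,
        "gap_in_face_widths": gap / avg_w,
        "size_ratio": min(left.w, right.w) / max(left.w, right.w),
    }


layout = describe_layout(FaceBox(400, 120, 200, 240), FaceBox(100, 100, 200, 240))
print(layout)
```

Expressing the gap in face widths rather than pixels keeps the description independent of image resolution.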

Preserve Who the Two People Are

A kiss video only works if the two people still look like themselves.

That sounds obvious, but it is one of the hardest parts of the whole pipeline. As soon as the face shape shifts too much, the eyes drift, or the features stop matching the original image, the illusion breaks.

The better the AI kiss tool can preserve facial structure, hair shape, face contour, and other identity cues, the more convincing the result will be.
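One common way to reason about identity preservation is to compare face embeddings, which are numeric vectors that summarize a face. The sketch below assumes some face-recognition model has already produced those vectors. The `identity_drift` helper and the 0.8 threshold are illustrative assumptions, not values taken from any specific tool.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0  # treat an empty embedding as "no match"
    return dot / (norm_u * norm_v)


def identity_drift(reference, frame_embeddings, threshold=0.8):
    """Return indices of frames whose face no longer matches the reference."""
    return [
        i
        for i, emb in enumerate(frame_embeddings)
        if cosine_similarity(reference, emb) < threshold
    ]


# Toy 3-dimensional embeddings; real ones have hundreds of dimensions.
reference = [1.0, 0.0, 0.0]
frames = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.5, 0.8, 0.3]]
print(identity_drift(reference, frames))  # frames that drifted too far
```

Here the last frame drifts far enough from the reference to be flagged, which mirrors the moment a viewer would feel that the person "no longer looks like themselves."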

Predict How the Kiss Should Happen

A still photo has no motion inside it. So the AI has to predict what a plausible kissing motion would look like.

The AI is building a mini kiss timeline. First, the faces are apart, then closer, then nearly touching, then touching. If the system does this well, your brain reads the result as a natural kiss rather than a slideshow of disconnected images.
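The timeline idea can be sketched as a schedule for the distance between the two faces. The example below uses a standard smoothstep easing curve so the motion starts and ends gently. `kiss_timeline` is a hypothetical helper, and a real generator learns its motion from data rather than following a fixed formula.

```python
def smoothstep(t):
    """Ease-in, ease-out curve on [0, 1]."""
    return t * t * (3 - 2 * t)


def kiss_timeline(start_gap, num_frames):
    """Gap between the faces at each frame, easing from start_gap down to 0."""
    if num_frames < 2:
        raise ValueError("need at least two frames")
    gaps = []
    for i in range(num_frames):
        t = i / (num_frames - 1)  # progress from 0.0 to 1.0
        gaps.append(start_gap * (1 - smoothstep(t)))
    return gaps


# Apart, closer, nearly touching, touching.
gaps = kiss_timeline(100.0, 5)
print(gaps)
```

Compared with a straight linear schedule, the eased version moves slowly at the start and end, which tends to read as more natural.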

Generate the Missing In-Between Frames

Research on image-to-video models describes this step directly: the model takes a reference image and synthesizes a sequence of new frames that preserve the scene while adding motion over time.

Turn It Into a Smooth Video

Once those new frames exist, they still need to work together as one continuous clip.

That final step is all about smoothness. The pacing has to feel even, the transitions have to feel natural, and the motion has to play as one connected moment rather than a series of separate images. A technically correct sequence can still feel wrong if the flow is too sharp, too jumpy, or too uneven.
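To show what "too jumpy" means in numbers, here is a minimal sketch that smooths a jittery per-frame value, such as a face's horizontal position, with an exponential moving average. The `ema_smooth` and `jitter` helpers are illustrative assumptions. Modern generators mostly handle smoothness inside the model itself, so this is only a simplified stand-in.

```python
def ema_smooth(values, alpha=0.5):
    """Exponential moving average; smaller alpha means heavier smoothing."""
    if not values:
        return []
    smoothed = [values[0]]
    for v in values[1:]:
        smoothed.append(alpha * v + (1 - alpha) * smoothed[-1])
    return smoothed


def jitter(values):
    """Mean absolute change between consecutive values."""
    steps = [abs(b - a) for a, b in zip(values, values[1:])]
    return sum(steps) / len(steps) if steps else 0.0


# A position that should rise steadily but wobbles from frame to frame.
raw = [0.0, 4.0, 2.0, 6.0, 4.0, 8.0]
smooth = ema_smooth(raw, alpha=0.5)
print(smooth)
print(jitter(raw), jitter(smooth))
```

The trade-off is lag: the smoothed track trails the raw one, so heavier smoothing buys steadiness at the cost of responsiveness.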

That is really how AI turns still photos into kissing motion. It first understands the image, identifies the two people, predicts how the kiss should unfold, generates the missing frames, and then blends everything into a smooth video.

Why AI Kissing Videos Sometimes Look Unnatural

Some results look soft, smooth, and surprisingly believable. Others look strange almost immediately. The reason usually comes down to how hard the original input makes the generation task.

Clear faces help. So do natural lighting, readable head angles, and minimal obstructions. The harder it is for the model to understand the subjects, the more it has to guess.

A kissing scene is especially demanding because the motion is subtle and close-range. Mouth movement, facial contact, partial occlusion, and tiny changes in angle all matter. Humans are very sensitive to errors in faces, so even a small problem becomes obvious. Research in this area repeatedly highlights identity drift, occlusion handling, and temporal instability as core challenges, which helps explain why close-contact scenes can be harder than simpler animations.

How AI Creates a Kiss from Two Separate Photos

Using one couple photo is already a complex task. Creating an AI kiss from two separate photos is even harder.

Now the AI model has to combine two different identities, two different lighting conditions, two different face angles, and sometimes two entirely different compositions into one believable motion sequence.

Even maintaining one consistent subject across time is a challenge. Extending that to two separate people naturally raises the difficulty. That is also why two-photo AI kissing workflows usually perform best when the source images already feel compatible, with similar framing, lighting, and facial visibility.
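The notion of "compatible" source images can be sketched as a simple pre-check. The example below assumes each photo has already been reduced to a few summary numbers. `PhotoStats`, `compatibility_issues`, and both thresholds are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class PhotoStats:
    """Hypothetical per-photo summary."""
    mean_brightness: float    # average pixel brightness, 0-255
    face_height_ratio: float  # face height divided by image height


def compatibility_issues(a, b, max_brightness_gap=40.0, max_scale_ratio=1.5):
    """List the reasons two photos may be hard to combine (empty if none)."""
    issues = []
    if abs(a.mean_brightness - b.mean_brightness) > max_brightness_gap:
        issues.append("lighting differs noticeably")
    big = max(a.face_height_ratio, b.face_height_ratio)
    small = min(a.face_height_ratio, b.face_height_ratio)
    if small <= 0 or big / small > max_scale_ratio:
        issues.append("faces are framed at very different sizes")
    return issues


close_up = PhotoStats(mean_brightness=120.0, face_height_ratio=0.4)
distant_bright = PhotoStats(mean_brightness=180.0, face_height_ratio=0.2)
similar = PhotoStats(mean_brightness=130.0, face_height_ratio=0.35)

print(compatibility_issues(close_up, distant_bright))
print(compatibility_issues(close_up, similar))
```

A check like this cannot guarantee a good result, but it captures why similar framing and lighting give the model less to reconcile.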

Bring Romantic Moments to Life with Lanta AI

Lanta AI is an easy way to turn still photos into believable AI kissing moments. If you want to see how an AI French kiss can be created from a single image or two separate photos, try Lanta AI and create your own video in just a few clicks.